Programmatic SEO in 2026: How to Build Thousands of Pages That Rank

Programmatic SEO is the practice of creating large numbers of similar pages at scale — using templates, databases, and automation — each targeting a specific, distinct keyword. It’s the strategy behind sites like Zillow (one page per property), Yelp (one page per business), Nomadlist (one page per city), and Canva (one template page per use case). When executed correctly, programmatic SEO can produce thousands of ranking pages from a single well-designed template-and-data system.

In 2026, programmatic SEO looks different. The simple template-and-scraped-data approach that worked in 2019 is now likely to be judged unhelpful by Google’s quality systems. Modern programmatic SEO requires genuine data depth, useful functionality, and content quality, but the scaling mechanics still work well for the right use cases.

Programmatic SEO Fundamentals

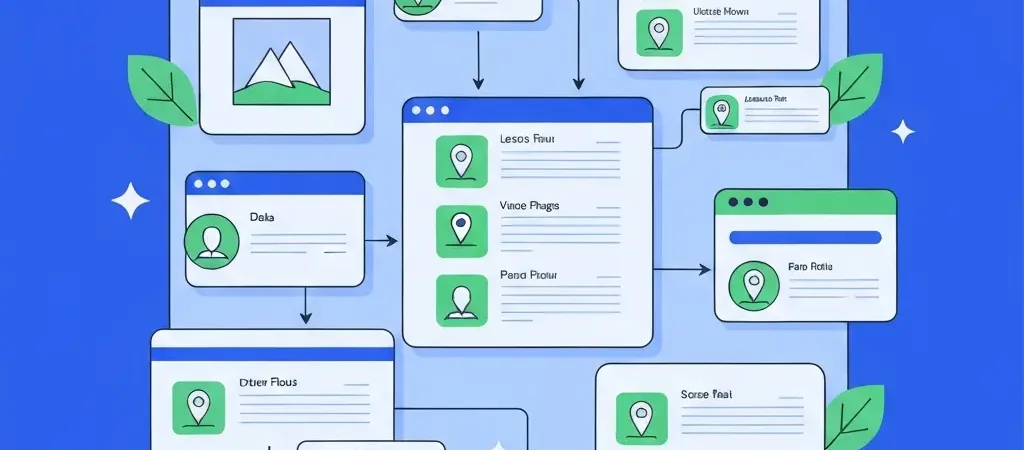

Programmatic SEO works by identifying a keyword pattern with many variations, then creating a unique page for each variation automatically.

The Core Formula

Head term + modifier = programmatic keyword

- “Best restaurants in [city]” → one page per city (Yelp’s model)

- “[Software] alternatives” → one page per software product (G2’s model)

- “[Job title] salary in [city]” → one page per job/city combination (Glassdoor’s model)

- “[Color] [product] ideas” → one page per color/product combination (Pinterest’s model)
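The formula is mechanical enough to sketch in a few lines. The Python below is a minimal illustration, not any particular tool’s method: it expands a pattern with hypothetical `{placeholder}` slots into every combination of modifier values.

```python
from itertools import product

def expand_pattern(pattern: str, **modifiers: list[str]) -> list[str]:
    """Fill every {placeholder} in pattern with each combination of modifier values."""
    names = list(modifiers)
    keywords = []
    # product() yields every combination, e.g. (job_title, city) pairs.
    for combo in product(*(modifiers[n] for n in names)):
        keywords.append(pattern.format(**dict(zip(names, combo))))
    return keywords

# Hypothetical modifier lists; real ones would come from your data source.
salary_keywords = expand_pattern(
    "{job_title} salary in {city}",
    job_title=["nurse", "data analyst"],
    city=["Austin", "Denver", "Boston"],
)
for kw in salary_keywords:
    print(kw)
```

Blind expansion also produces combinations nobody searches for, so each generated variant still needs demand validation before it becomes a page.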

Required Components

Every programmatic SEO implementation needs:

- A keyword pattern with genuine search demand across many variations

- A data source that provides unique, accurate data for each page

- A template that structures the data into a useful, navigable page

- Quality controls that prevent thin pages from being published

- Indexing management to keep thousands of low-quality variations out of Google’s index

Best Use Cases for Programmatic SEO

Location-Based Content

Any service, information, or product with location-specific relevance is a strong programmatic candidate: “best [service] in [city],” “[property type] for sale in [neighborhood],” “[event type] venues in [city].” Each location variant has unique data (different businesses, prices, characteristics) that makes individual pages genuinely useful.

Product Catalog SEO

E-commerce sites create category and subcategory pages programmatically: “[brand] [product type],” “[color/size] [product name],” “[use case] [product type].” Amazon, Etsy, and eBay run programmatic SEO at enormous scale, with millions of pages covering a vast range of product attribute combinations.

Comparison and Alternative Pages

“[Tool A] vs [Tool B]” or “[Tool] alternatives” pages scale programmatically across software categories. G2, Capterra, and Software Advice built significant traffic using this model. The unique data per page comes from user reviews, feature comparisons, and pricing data.

Data and Statistics Pages

Patterns like “[metric] statistics for [industry/year]” and “salary data for [role] in [location]” fit this model. Each page aggregates publicly available or proprietary data for a specific data query, providing genuine research utility.

Building Programmatic Pages That Rank

Step 1: Identify Your Programmatic Keyword Pattern

Use keyword research tools to find head terms with high variation potential. As a rough guide, look for 50,000+ combined monthly searches across all variations, at least 100 viable, distinct variations (locations, products, comparisons), and low competition for each individual variation (most long-tail programmatic keywords have a very low keyword difficulty, or KD, score).
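A minimal sketch of this check, assuming you have exported each variation’s monthly search volume and keyword difficulty (on a 0 to 100 scale) from a tool like Ahrefs or Semrush. The `KeywordVariant` class and the `max_kd=20` cutoff for “low competition” are hypothetical; the other two thresholds are the rough guides above.

```python
from dataclasses import dataclass

@dataclass
class KeywordVariant:
    keyword: str
    monthly_searches: int
    keyword_difficulty: int  # assumed 0-100 scale, as exported from a keyword tool

def pattern_is_viable(variants, min_total_searches=50_000, min_variants=100, max_kd=20):
    """Rough check: enough combined demand and enough low-competition variants."""
    with_demand = [v for v in variants if v.monthly_searches > 0]
    total_searches = sum(v.monthly_searches for v in with_demand)
    # max_kd=20 is a hypothetical cutoff for "low competition".
    low_competition = [v for v in with_demand if v.keyword_difficulty <= max_kd]
    return total_searches >= min_total_searches and len(low_competition) >= min_variants

# Made-up numbers, used only to exercise the function.
cities = [KeywordVariant(f"emergency plumber in city {i}", 400, 8) for i in range(150)]
print(pattern_is_viable(cities))       # True: 150 variants, 60,000 searches
print(pattern_is_viable(cities[:50]))  # False: only 50 variants, 20,000 searches
```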

Step 2: Source Your Data

Identify where the unique data for each page will come from:

- Public data APIs: government data, geographic APIs, financial data feeds

- User-generated content: reviews, listings, profiles (requires a product/community to seed)

- Proprietary research: original surveys, database compilations, manual data collection

- Third-party partnerships: licensed data from industry sources

- Web scraping (with permission): aggregating publicly available data with appropriate attribution

Step 3: Build Your Template

Design a template that: (1) answers the query directly at the top, (2) presents the unique data in a structured, scannable format, (3) includes contextual editorial content that adds interpretation beyond raw data, and (4) links to related pages in the same programmatic cluster.
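To make the four template rules concrete, here is a hypothetical renderer for a “best restaurants in [city]” page. The field names (`name`, `cuisine`, `rating`), the `/restaurants/<slug>` URL scheme, and the plain HTML output are all assumptions; a real site would use its CMS or framework’s templating instead.

```python
from html import escape

def slug(name):
    """'San Antonio' -> 'san-antonio' (hypothetical URL scheme)."""
    return "-".join(name.lower().split())

def render_city_page(city, restaurants, editorial, related_cities):
    """Render a 'best restaurants in [city]' page following the four template rules."""
    ranked = sorted(restaurants, key=lambda r: r["rating"], reverse=True)
    # (1) Answer the query directly at the top.
    names = ", ".join(escape(r["name"]) for r in ranked[:3])
    answer = f"<p>Top-rated restaurants in {escape(city)}: {names}.</p>"
    # (2) Present the unique data in a structured, scannable table.
    rows = "".join(
        f"<tr><td>{escape(r['name'])}</td><td>{escape(r['cuisine'])}</td>"
        f"<td>{r['rating']:.1f}</td></tr>"
        for r in ranked
    )
    table = f"<table><tr><th>Name</th><th>Cuisine</th><th>Rating</th></tr>{rows}</table>"
    # (3) Editorial context that interprets the data.
    context = f"<p>{escape(editorial)}</p>"
    # (4) Internal links to sibling pages in the same cluster.
    links = "".join(
        f'<li><a href="/restaurants/{slug(c)}">Restaurants in {escape(c)}</a></li>'
        for c in related_cities
    )
    return f"<h1>Best restaurants in {escape(city)}</h1>{answer}{table}{context}<ul>{links}</ul>"

page = render_city_page(
    "Austin",
    [{"name": "Taco Stand", "cuisine": "Tex-Mex", "rating": 4.5},
     {"name": "Smoke & Oak", "cuisine": "BBQ", "rating": 4.8}],
    "Austin is best known for barbecue, but its taco scene is just as deep.",
    ["San Antonio", "Houston"],
)
print(page)
```

Escaping every data field matters at this scale: one malformed record in the source data should not break thousands of pages.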

Step 4: Implement Quality Gates

Set minimum data thresholds for publishing, and only index pages with enough unique data to be genuinely useful. Pages where data is sparse, repetitive, or low-quality should be held back or served with noindex until they can be enriched, rather than indexed thin.
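Quality gates work best as explicit, testable rules. The sketch below assumes three hypothetical signals per page (entity count, data points unique to that page, and last update date) with illustrative thresholds; both the signals and the numbers should be tuned to your own dataset.

```python
from dataclasses import dataclass
from datetime import date

@dataclass
class PageData:
    slug: str
    listings: int       # entities shown on the page (e.g. restaurants)
    unique_facts: int   # data points not shared with sibling pages
    last_updated: date

def publish_decision(page, today, min_listings=5, min_unique_facts=10, max_age_days=180):
    """Return 'index', 'noindex', or 'hold'. All thresholds are illustrative."""
    if page.listings == 0:
        return "hold"  # nothing useful to show yet: don't publish at all
    is_fresh = (today - page.last_updated).days <= max_age_days
    if page.listings >= min_listings and page.unique_facts >= min_unique_facts and is_fresh:
        return "index"
    return "noindex"  # live for users, kept out of the index until enriched

today = date(2026, 1, 15)
for p in [PageData("austin", 12, 40, date(2025, 12, 1)),
          PageData("smallville", 2, 3, date(2025, 12, 1)),
          PageData("nowhere", 0, 0, date(2025, 12, 1))]:
    print(p.slug, publish_decision(p, today))
```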

Step 5: Manage Indexing

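Submit your highest-value programmatic pages first via sitemap. As your data quality improves and pages earn rankings, gradually open indexing to additional page variants. Use the X-Robots-Tag: noindex HTTP header (or an equivalent robots meta tag) on thin or unverified pages until they’re ready, and don’t also block those URLs in robots.txt, since Google has to crawl a page to see its noindex directive.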
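These indexing rules can be sketched with Python’s standard library: build sitemaps that list only indexable pages, highest-value first, and attach X-Robots-Tag: noindex to everything else. The `(slug, value_score, indexable)` tuple layout and the value score itself are assumptions; the 50,000-URL cap per sitemap file comes from the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_SITEMAP = 50_000  # per-file limit in the sitemaps.org protocol

def build_sitemaps(pages, base_url):
    """pages: (slug, value_score, indexable) tuples. Returns sitemap XML strings."""
    # Noindexed pages stay out of sitemaps; the rest are ordered by value score.
    ranked = sorted((p for p in pages if p[2]), key=lambda p: p[1], reverse=True)
    sitemaps = []
    for start in range(0, len(ranked), MAX_URLS_PER_SITEMAP):
        urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
        for slug, _score, _indexable in ranked[start:start + MAX_URLS_PER_SITEMAP]:
            url = ET.SubElement(urlset, "url")
            ET.SubElement(url, "loc").text = f"{base_url}/{slug}"
        sitemaps.append(ET.tostring(urlset, encoding="unicode"))
    return sitemaps

def robots_headers(indexable):
    """Extra HTTP response headers for a page: thin pages get X-Robots-Tag: noindex."""
    return {} if indexable else {"X-Robots-Tag": "noindex"}

pages = [("austin", 0.9, True), ("smallville", 0.1, False), ("denver", 0.95, True)]
print(build_sitemaps(pages, "https://example.com")[0])
print(robots_headers(False))
```

Ordering mostly matters once pages span several sitemap files: the first file then holds the highest-value URLs and can be submitted before the rest.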

Programmatic SEO and Google’s Quality Standards

Google’s Helpful Content system (launched in August 2022, strengthened through 2023, and folded into Google’s core ranking systems in March 2024) is aimed at content created primarily for search engines, and thin programmatic pages are a prime example. Sites with large numbers of thin programmatic pages have received site-wide ranking demotions that affect every page on the domain, not just the programmatic ones.

Quality Standards for 2026 Programmatic SEO

- Each page must contain meaningfully different content — not just variable substitution in a sparse template

- Data must be accurate, current, and more useful than what users could find elsewhere

- Pages should include functionality or tools that add value beyond static content (calculators, maps, filters, search)

- AI editorial content can supplement data-driven pages to add interpretation, context, and expertise
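One way to check for “meaningfully different content” is to measure how much text sibling pages share. This sketch compares word 3-gram shingles using Jaccard similarity; the 0.8 threshold and the sample pages are illustrative assumptions, not a Google metric.

```python
import re
from itertools import combinations

def shingles(text, k=3):
    """Set of k-word shingles (overlapping word n-grams) from a page's text."""
    words = re.findall(r"\w+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}

def jaccard(a, b):
    """Share of shingles two pages have in common (1.0 = identical)."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0

def near_duplicates(pages, threshold=0.8, k=3):
    """Return (slug_a, slug_b, similarity) for page pairs at or above threshold."""
    sets = {slug: shingles(text, k) for slug, text in pages.items()}
    flagged = []
    for a, b in combinations(sets, 2):
        similarity = jaccard(sets[a], sets[b])
        if similarity >= threshold:
            flagged.append((a, b, round(similarity, 2)))
    return flagged

boilerplate = ("We compare local plumbers by price, response time and reviews so you can "
               "book with confidence today. Every listing shows licensing status, typical "
               "call-out fees and verified customer ratings.")
sample_pages = {
    "austin": "Looking for plumbers in Austin? " + boilerplate,
    "denver": "Looking for plumbers in Denver? " + boilerplate,
    "guide": "Hard water means tankless heaters in Denver need yearly descaling, while "
             "Austin homes more often face slab leaks from shifting clay soil.",
}
print(near_duplicates(sample_pages))  # only the austin/denver pair is flagged
```

Comparing every pair is quadratic, so for tens of thousands of pages you would typically switch to MinHash with locality-sensitive hashing, which approximates the same Jaccard score.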

Combining Programmatic and AI Editorial Content

The most sophisticated programmatic SEO implementations in 2026 combine template-driven data pages with AI-generated editorial content specific to each variation. This hybrid approach can help meet Google’s quality expectations while maintaining the scale that makes programmatic SEO valuable.

The model: a programmatic framework provides the data structure and page infrastructure, while an AI content system like Authenova generates unique editorial commentary for each page variation. For a “restaurants in [city]” page, Authenova might generate a 300-word introduction that contextualizes the restaurant scene in that specific city — unique content that differentiates each programmatic page from pure template-swapping.

This hybrid approach scales better than pure editorial content production (you can’t write 10,000 unique city guides manually) while meeting higher quality standards than pure data-driven programmatic pages (unique AI commentary reduces the risk of thin content flags).

Academic platforms like Tesify use a related hybrid approach — combining standardized structural guides (programmatic in structure) with AI-generated unique content for each academic topic variant, covering hundreds of specific academic writing challenges across multiple language markets simultaneously.

Frequently Asked Questions

Is programmatic SEO still effective in 2026?

Yes. Programmatic SEO remains highly effective when implemented with genuine data quality and content depth. Sites like Zillow, Yelp, and Nomadlist continue to drive enormous organic traffic through programmatic pages. What no longer works is thin programmatic implementations with minimal unique content per page. The bar for quality has risen significantly since 2022, but programmatic SEO built on rich data and AI editorial content can still scale very well.

How many pages can you create with programmatic SEO?

There’s no technical upper limit — some sites have millions of programmatic pages. The practical limit is data quality: only publish pages where you have sufficient unique, accurate data to make them genuinely useful. A well-implemented programmatic SEO strategy might create 1,000–100,000 pages for a regional service business, or millions for a national data aggregator. The key constraint is quality, not quantity.

What tools are needed for programmatic SEO?

Core tools for programmatic SEO: a headless CMS or database-driven site architecture (WordPress with custom post types, Webflow CMS, or custom-built), keyword research tools (Ahrefs or Semrush) to validate search demand across variations, data management systems (Airtable, Google Sheets, or custom databases), an AI content platform (Authenova) for editorial layer generation, and SEO crawling tools (Screaming Frog) to monitor page quality and indexing status at scale.

How do you prevent programmatic pages from cannibalizing each other?

Programmatic page cannibalization, where similar pages compete against each other for the same query, is prevented through clear keyword differentiation at the template design level. Each page variant should target a genuinely distinct keyword intent. If two page variants would rank for the same query (e.g., “New York City restaurants” and “restaurants New York”), consolidate them: keep the preferred version, then either 301-redirect the duplicate to it or point the duplicate’s canonical tag at it.
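Exact-match keyword lists won’t catch variants like “New York City restaurants” and “restaurants New York,” so a simple normalization pass can surface candidates for consolidation before pages are generated. This is a sketch under stated assumptions: the stopword list and the `ALIASES` table are hypothetical and would need to reflect your own locations and modifiers.

```python
import re
from collections import defaultdict

STOPWORDS = {"in", "the", "of", "for", "near"}  # hypothetical modifier-neutral words
# Hypothetical alias table mapping alternate names to one canonical form.
ALIASES = {"new york city": "new york", "nyc": "new york"}

def intent_key(keyword):
    """Normalize a keyword to an order-insensitive key: aliases resolved, stopwords dropped."""
    text = keyword.lower()
    for alias, canonical in ALIASES.items():
        text = re.sub(rf"\b{re.escape(alias)}\b", canonical, text)
    tokens = [t for t in re.findall(r"\w+", text) if t not in STOPWORDS]
    return tuple(sorted(set(tokens)))

def cannibalization_groups(keywords):
    """Return groups of keywords that collapse to the same intent key."""
    groups = defaultdict(list)
    for kw in keywords:
        groups[intent_key(kw)].append(kw)
    return [kws for kws in groups.values() if len(kws) > 1]

planned = ["New York City restaurants", "restaurants New York",
           "restaurants in NYC", "restaurants in Boston"]
print(cannibalization_groups(planned))
```

Lexical grouping only finds candidates; checking whether the variants actually return the same search results is a more reliable confirmation before merging pages.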

What’s the difference between programmatic SEO and AI content generation?

Programmatic SEO creates pages from templates populated with structured data — it scales through data variation rather than unique content writing per page. AI content generation creates unique editorial content for each target keyword — it scales through AI writing rather than data variation. They’re complementary approaches: programmatic SEO excels for data-rich, structured content (listings, comparisons, statistics); AI content generation excels for editorial guides, tutorials, and analysis. Many advanced SEO programs combine both.

Add AI Editorial to Your Programmatic SEO

Authenova complements programmatic SEO strategies by generating unique editorial content that differentiates programmatic pages and satisfies Google’s quality requirements — at the scale programmatic strategies demand.