Programmatic SEO in 2026: How to Build 10,000 Pages That Actually Rank

Programmatic SEO (pSEO) is the strategy of automatically generating large numbers of keyword-targeted pages from structured data and reusable templates, so a single team can publish thousands of ranking pages without writing each one individually. Executed well in 2026, programmatic SEO drives compounding organic traffic that is hard to match through link building alone. Zapier generates over 5.8 million monthly organic visitors from approximately 800,000 integration pages. Airbnb has over 1.1 million listing pages indexed and ranking. Canva has millions of template pages generating long-tail traffic for free.

However, programmatic SEO in 2026 operates in a fundamentally different risk environment than it did in 2023 or 2024. Google’s March 2026 core update explicitly named “scaled content abuse” as a violation — and sites generating thousands of near-identical pages through AI or template automation without genuine added value saw ranking losses of 60–90% almost overnight. The question is no longer “can I build 10,000 pages?” — it is “how do I build 10,000 pages that Google rewards rather than penalizes?”

This guide answers that question with a complete, post-March-2026 programmatic SEO playbook: from data sourcing and template architecture through quality gates, content velocity benchmarking, and real-world case studies.

Programmatic SEO is the automated creation of large numbers of keyword-targeted pages using structured data and templates. In 2026, effective pSEO requires genuine data differentiation per page — unique, verifiable data that serves searcher intent — not keyword-swapped clones. Sites with real data differentiation see 40–60% of published pages earn organic traffic. Sites without it face Google’s scaled content abuse penalties.

The pSEO Landscape After March 2026

According to Search Engine Land’s analysis of the March 2026 core update, sites that relied on AI or template automation to generate near-identical pages lost 60–90% of their rankings within weeks of the update rollout. The update explicitly targeted three patterns: (1) pages with identical text except for city or keyword substitutions, (2) auto-generated pages with no verifiable unique data, and (3) pages that duplicate existing content at a different URL without added value.

What survived — and in many cases thrived — was programmatic SEO built on genuine data differentiation. According to Digital Applied’s post-update analysis, legitimate programmatic pages built on unique, structured data — local business directories with verified listings, comparison tools with live pricing, travel guides with real inventory data — continued to rank and in some cases gained positions abandoned by penalized competitors. This is the crucial insight for 2026: the template is still legal; the absence of unique data is not.

The AI SEO software market is projected to reach $4.97 billion by 2033 — and a significant portion of that growth is driven by pSEO platforms that incorporate data differentiation by design. Our analysis of publishing 100 articles per month shows that data-first pSEO pages consistently outperform template-first approaches in both ranking speed and ranking duration.

What Still Works: Data-Differentiated Programmatic SEO

Successful programmatic SEO in 2026 has four defining characteristics that distinguish it from the scaled content abuse Google now penalizes:

- Unique data per page: Every page contains at least one data element — price, rating, location, specification, review — that cannot be replicated by a competitor without the same data source.

- Searcher intent matching: Each page targets a specific navigational or transactional intent, not a head keyword with vague informational intent.

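- Quality floor enforcement: Pages below a defined content quality threshold are either enriched before indexation or kept out of the index with a noindex directive. A robots.txt disallow rule only blocks crawling and does not reliably keep a URL out of the index.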

- Canonical architecture: Thin or near-duplicate pages consolidate link equity to their canonical counterparts, preventing index bloat.

The statistical foundation of pSEO remains intact: 92.42% of all search queries have fewer than 10 monthly searches, which is the basis for long-tail keyword targeting at scale. A single page targeting one of these queries may generate only 3–5 monthly visits, but 10,000 such pages at that rate generate 30,000–50,000 monthly visits from queries no competitor is actively targeting. Building and maintaining that infrastructure efficiently requires the kind of SEO automation infrastructure that was unavailable to most teams just two years ago.

Step 1: Keyword Research at Scale

Programmatic keyword research starts with identifying “head terms” and “modifiers” — the combinatorial building blocks that generate thousands of keyword variations from a small seed set. The goal is to generate 1,000–100,000+ keyword variations that share a consistent intent and can be served by a consistent page template.

Finding Head Terms

Head terms define the product, location, or comparison category your pSEO targets. Examples: “[software] alternative,” “[city] [service] cost,” “[product A] vs [product B].” Each head term should represent a distinct searcher intent that your data source can satisfy.

Finding Modifiers

Modifiers are the variables that differentiate individual pages: city names, product names, competitor names, features, price tiers, or industry verticals. Modifiers should come from your data source — if you have 500 verified city listings, you have 500 valid city modifiers. Never generate modifiers without a corresponding data source entry.
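
The head-term and modifier combination described above can be sketched in a few lines of Python. This is a minimal illustration, not a production keyword tool: the `expand_keywords` function, the `{placeholder}` pattern syntax, and the sample cities and services are all assumptions made for the example. In practice the modifier lists would be read from the verified data source.

```python
from itertools import product


def expand_keywords(pattern: str, modifiers: dict[str, list[str]]) -> list[str]:
    """Fill every placeholder in `pattern` with each combination of modifiers.

    `pattern` uses str.format-style placeholders, e.g. "{city} {service} cost".
    `modifiers` maps each placeholder name to values taken from the data source.
    """
    names = list(modifiers)
    keywords = []
    for combo in product(*(modifiers[name] for name in names)):
        keywords.append(pattern.format(**dict(zip(names, combo))).lower())
    return keywords


# Hypothetical sample data; real lists should mirror verified data-source entries.
modifiers = {
    "city": ["Austin", "Denver"],
    "service": ["plumber", "electrician", "roofer"],
}
keywords = expand_keywords("{city} {service} cost", modifiers)
print(len(keywords))  # 2 cities x 3 services = 6 keywords
print(keywords[0])    # austin plumber cost
```

Because every keyword is built only from modifier values that exist in the input lists, the sketch cannot produce a keyword without a matching data entry.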

Keyword Qualification Criteria

- Monthly search volume: 10–10,000 (long-tail sweet spot; avoid head terms above 10,000 for pSEO)

- Keyword difficulty: under 35 (pSEO pages rarely earn enough backlinks to compete for difficult terms)

- CPC above $0: commercial intent proxy

- SERP includes at least two results of the same content type as your template
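
The four criteria above translate directly into a filter. The sketch below assumes keyword metrics have already been exported from a research tool; the `KeywordMetrics` fields and the sample rows are hypothetical and only illustrate the thresholds listed in this section.

```python
from dataclasses import dataclass


@dataclass
class KeywordMetrics:
    keyword: str
    monthly_volume: int
    difficulty: int             # 0-100 score from a keyword tool
    cpc_usd: float
    matching_serp_results: int  # results of the same content type as the template


def qualifies(k: KeywordMetrics) -> bool:
    """Apply the four qualification criteria from the list above."""
    return (
        10 <= k.monthly_volume <= 10_000
        and k.difficulty < 35
        and k.cpc_usd > 0
        and k.matching_serp_results >= 2
    )


# Hypothetical sample rows for illustration only.
candidates = [
    KeywordMetrics("austin plumber cost", 320, 18, 4.10, 3),
    KeywordMetrics("plumber", 90_000, 62, 7.50, 0),
    KeywordMetrics("denver roofer cost", 40, 12, 0.0, 2),
]
qualified = [k.keyword for k in candidates if qualifies(k)]
print(qualified)  # ['austin plumber cost']
```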

Step 2: Data Sourcing and Structuring

The data source is the most critical decision in pSEO architecture. It determines both the differentiation quality of your pages and the scale ceiling of your program. The four most commonly used data source types in 2026 are:

1. Internal Product or Inventory Data

The cleanest pSEO data source — you own it, it’s unique, and it’s verifiable. E-commerce sites, SaaS platforms, and marketplaces can generate one page per product, plan tier, integration, or template. This is the Zapier and Canva model: every integration or template becomes a landing page.

2. Third-Party APIs with Unique Data

APIs from property registries, business directories, government databases, or financial data providers supply unique, verifiable data that can differentiate pages at scale. The data must be licensed for commercial republication. Examples: MLS real estate data, Companies House records, weather APIs for location-specific pages.

3. User-Generated Content (UGC)

Review platforms, Q&A communities, and listing directories can serve as pSEO data sources if the UGC is genuine and diverse enough to differentiate pages. TripAdvisor’s 226+ million monthly visitors are driven almost entirely by UGC-differentiated pages.

4. Structured Research Data

Primary research — surveys, benchmarks, price trackers — produces the most citation-worthy pSEO pages. A salary comparison page built from 10,000 survey responses is differentiated in a way that no competitor can replicate without conducting the same research. This aligns perfectly with Google’s E-E-A-T requirements and AEO citation patterns, as we document in our content velocity compounding research.

Step 3: Template Architecture

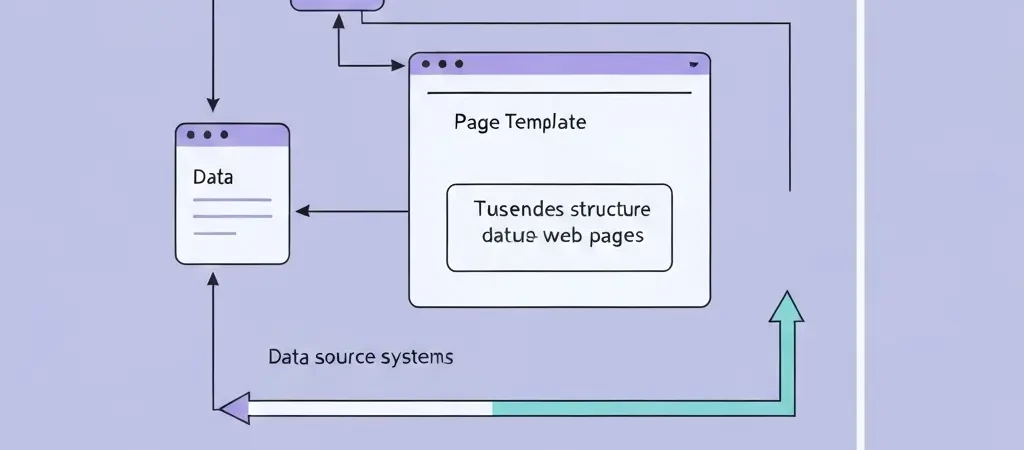

Template architecture separates static elements (consistent across all pages) from dynamic placeholders (populated from the data source). A well-designed pSEO template has three zones:

Static Zone (30–40% of page)

Navigation, header, footer, legal disclaimers, site-wide CTAs, and the structural HTML that is identical across all pages. This zone should be crawled efficiently; Google’s crawl budget allocation does not penalize identical static zones across large page sets.

Dynamic Zone (50–60% of page)

The core content area, populated with unique data from the data source. This zone must contain substantively different content for every page to avoid the scaled content abuse classification. Minimum differentiation requirements in 2026: unique headline, unique introductory paragraph, at least three data-specific facts not present on other pages, and at least one data table or structured element unique to that page.

Contextual Zone (10–20% of page)

Internal links to related pages, related category navigation, and dynamic FAQ sections generated from data-specific queries. This zone is where pSEO pages build topical depth and pass link equity across the cluster. See our guide on building topical authority with AI content for the internal linking patterns that work best at pSEO scale.
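
One way to picture the three zones is a small renderer built on Python's standard `string.Template`. This is a simplified sketch under stated assumptions: the HTML fragments, field names (`service`, `city`, `median_price`, `quote_count`), and the `render_page` function are invented for illustration, and a real template would carry far more dynamic content than this one.

```python
from html import escape
from string import Template

# Static zone: identical on every page.
STATIC_HEADER = "<header>Site navigation</header>"
STATIC_FOOTER = "<footer>Legal disclaimers</footer>"

# Dynamic zone: placeholders filled from one data-source record.
DYNAMIC_ZONE = Template(
    "<h1>$service cost in $city</h1>\n"
    "<p>Median quoted price: $median_price USD across $quote_count quotes.</p>"
)


def render_page(record: dict, related: list[tuple[str, str]]) -> str:
    """Assemble the three zones; raises KeyError if a dynamic field is missing."""
    safe = {key: escape(str(value)) for key, value in record.items()}
    dynamic = DYNAMIC_ZONE.substitute(safe)
    # Contextual zone: internal links to related pages in the same cluster.
    links = "\n".join(
        f'<li><a href="{escape(url)}">{escape(label)}</a></li>'
        for url, label in related
    )
    contextual = f"<ul>\n{links}\n</ul>"
    return "\n".join([STATIC_HEADER, dynamic, contextual, STATIC_FOOTER])


# Hypothetical record and related link for illustration.
record = {"service": "Plumber", "city": "Austin", "median_price": 180, "quote_count": 42}
html = render_page(record, [("/austin/electrician-cost", "Electrician cost in Austin")])
print(html)
```

`Template.substitute` raises `KeyError` when a placeholder has no value, so a record with a missing field fails loudly instead of publishing a page with an empty placeholder.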

Step 4: Quality Gates and Content Scoring

Quality gates are automated checks that prevent below-threshold pages from being indexed. In 2026, a minimum three-gate system is recommended before any pSEO page goes live:

- Uniqueness gate: Automated near-duplicate detection — pages with more than 70% text overlap with any other indexed page are held for enrichment.

- Content completeness gate: Every required dynamic field must be populated; pages with empty data placeholders are blocked from indexation.

- Minimum word count gate: 300 words minimum for supporting pages, 600 for cluster-level pSEO pages. Pages below threshold are auto-enriched with contextual paragraphs before indexation.

Successful programmatic SEO campaigns — defined as campaigns where 40–60% of published pages earn at least some organic traffic — universally apply quality gates before launch. Campaigns without quality gates typically see fewer than 10% of pages earn any traffic, with the remainder contributing to index bloat that degrades the domain’s overall crawl budget allocation.
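
The three gates can be sketched as one function that returns the names of the gates a page fails. The article does not specify how text overlap is measured, so this example assumes Jaccard similarity over three-word shingles; the function names, the `body` field, and the sample page are likewise hypothetical. The default thresholds are the ones named above (70% overlap, 300 words).

```python
import re


def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Return the set of overlapping word n-grams in `text`."""
    words = re.findall(r"\w+", text.lower())
    return {tuple(words[i:i + size]) for i in range(max(len(words) - size + 1, 0))}


def overlap(a: str, b: str) -> float:
    """Jaccard similarity of word shingles, between 0.0 and 1.0 (an assumed metric)."""
    sa, sb = shingles(a), shingles(b)
    if not sa or not sb:
        return 0.0
    return len(sa & sb) / len(sa | sb)


def run_gates(page: dict, indexed_texts: list[str], required: list[str],
              min_words: int = 300, max_overlap: float = 0.70) -> list[str]:
    """Return the names of failed gates; an empty list means the page may go live."""
    failures = []
    body = page.get("body", "")
    if any(overlap(body, other) > max_overlap for other in indexed_texts):
        failures.append("uniqueness")
    if any(page.get(field) in (None, "") for field in required):
        failures.append("completeness")
    if len(re.findall(r"\w+", body)) < min_words:
        failures.append("word_count")
    return failures


# Hypothetical page that copies an existing body and lacks a price.
existing = ["plumber cost in austin is based on local quotes collected this year"]
page = {
    "title": "Plumber cost in Denver",
    "price": None,
    "body": "plumber cost in austin is based on local quotes collected this year",
}
print(run_gates(page, existing, required=["title", "price"]))
# ['uniqueness', 'completeness', 'word_count']
```

Comparing every page against every indexed page is quadratic, so at tens of thousands of pages an approximate method such as MinHash would likely replace the pairwise loop.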

Step 5: Publishing Pipeline and Indexation

The publishing pipeline determines how quickly pages move from data source to indexed URL. In 2026, the optimal pSEO publishing cadence follows these principles:

- Never push 100,000 URLs live overnight. Roll out in staged batches of 500–5,000 pages, monitoring crawl coverage and indexation rates before expanding.

- Use a dedicated sitemap for pSEO pages. A separate sitemap.xml for programmatic pages makes it easy to monitor indexation rates and identify crawl coverage issues.

- Submit to Google Search Console immediately. Manual URL inspection for the first batch of pages accelerates initial indexation and surfaces any coverage errors before bulk launch.

- Implement internal linking from day one. Pages that receive at least one internal link from an established page are indexed significantly faster than orphan pages.
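
The staged rollout and dedicated sitemap can be combined in a short script. The sketch below is one possible shape, assuming the full URL list is already known; the `batches` and `build_sitemap` helpers and the `example.com` URLs are illustrative. The XML namespace is the one defined by the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET
from typing import Iterator

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def batches(urls: list[str], size: int) -> Iterator[list[str]]:
    """Yield consecutive slices of at most `size` URLs."""
    for start in range(0, len(urls), size):
        yield urls[start:start + size]


def build_sitemap(urls: list[str]) -> str:
    """Serialize URLs as a sitemap <urlset> document."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical URLs: 1,200 pages released in stages of 500.
all_urls = [f"https://example.com/cost/page-{i}" for i in range(1200)]
stages = list(batches(all_urls, 500))
print([len(stage) for stage in stages])  # [500, 500, 200]

# Publish only the first stage, then expand once indexation looks healthy.
first_sitemap = build_sitemap(stages[0])
```

Each stage stays well under the sitemap protocol's limit of 50,000 URLs per file, so one pSEO sitemap per stage (or one that grows stage by stage) keeps indexation reporting separate from the rest of the site.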

The WordPress auto-publishing setup guide covers the specific plugin and API configurations needed to execute a staged pSEO rollout on WordPress without manual intervention at each step.

Step 6: Monitoring and Performance Triage

Programmatic SEO requires a monitoring system distinct from standard SEO reporting. At pSEO scale, individual page-level analysis is impractical — you need cohort-level performance triage:

- Indexation cohort tracking: Group pages by launch date and track what percentage of each cohort is indexed within 7, 14, and 30 days.

- Traffic cohort analysis: Of indexed pages, what percentage earn at least 1 monthly visit? Target: 40–60%. Below 30%: trigger quality gate audit.

- Cannibalisation monitoring: Use Google Search Console to identify pages competing for the same queries and consolidate with canonical tags or 301 redirects.

- Freshness rotation: Schedule quarterly data refreshes for all pSEO pages to maintain content freshness signals. Pages with unchanged data for 180+ days see measurable ranking degradation.
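
Cohort-level triage of this kind needs little more than launch dates, indexation dates, and visit counts per page. The following sketch assumes that data has been exported into simple records; `PageRecord`, both function names, and the sample pages are hypothetical. The 30% and 40% thresholds come from the list above, while the "watch" label for the range between them is an assumption, since the text does not name that band.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class PageRecord:
    url: str
    launched: date
    indexed_on: Optional[date]  # None if not yet indexed
    monthly_visits: int


def indexation_rates(pages: list[PageRecord], windows=(7, 14, 30)) -> dict:
    """Per launch-date cohort, share of pages indexed within each window (days)."""
    cohorts = defaultdict(list)
    for page in pages:
        cohorts[page.launched].append(page)
    report = {}
    for launched, members in cohorts.items():
        report[launched] = {
            window: sum(
                1 for p in members
                if p.indexed_on is not None and (p.indexed_on - p.launched).days <= window
            ) / len(members)
            for window in windows
        }
    return report


def traffic_status(pages: list[PageRecord]) -> str:
    """Classify the share of indexed pages earning at least 1 monthly visit."""
    indexed = [p for p in pages if p.indexed_on is not None]
    if not indexed:
        return "no indexed pages"
    share = sum(1 for p in indexed if p.monthly_visits >= 1) / len(indexed)
    if share < 0.30:
        return "audit quality gates"
    return "on target" if share >= 0.40 else "watch"


# Hypothetical cohort launched on one day.
launch = date(2026, 4, 1)
pages = [
    PageRecord("/a", launch, date(2026, 4, 5), 12),   # indexed after 4 days
    PageRecord("/b", launch, date(2026, 4, 20), 0),   # indexed after 19 days
    PageRecord("/c", launch, None, 0),                # not indexed
    PageRecord("/d", launch, date(2026, 4, 10), 3),   # indexed after 9 days
]
print(indexation_rates(pages)[launch])  # {7: 0.25, 14: 0.5, 30: 0.75}
print(traffic_status(pages))            # on target
```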

Real-World pSEO Case Studies

Zapier: 800,000 Pages, 5.8M Monthly Visitors

Zapier’s pSEO program is the most-cited example in the industry for good reason. Each of Zapier’s approximately 800,000 integration pages targets a specific “[App A] + [App B] integration” query, populated with unique integration documentation, step-by-step setup instructions, and workflow templates specific to that combination. No two pages share the same content. Result: over 5.8 million monthly organic visitors, the majority from long-tail integration queries with near-zero competition.

Canva: Millions of Template Pages

Canva generates unique pages for every template in their library, targeting queries like “[style] [format] template free.” Each page showcases the actual template, includes unique design specifications, and provides a direct-to-editor CTA. The uniqueness signal comes from the template visual itself — no two pages share the same image asset. Canva’s pSEO program is responsible for a substantial portion of their estimated 100+ million monthly organic visitors.

Wise: 60M+ Monthly Visits from Currency Pages

Wise builds currency conversion pages for every currency pair — “[Currency A] to [Currency B] converter” — populated with live exchange rate data from their own infrastructure. The data is real-time, unique, and directly serves the searcher’s intent. No competitor can replicate the page without the same live data feed. This is the gold standard for data-differentiated pSEO.

pSEO Data Summary: Key Statistics for 2026

Programmatic SEO by the Numbers — 2026

| Statistic | What it describes |
| --- | --- |
| 92.42% of queries | Have fewer than 10 monthly searches; the statistical foundation of long-tail pSEO |
| 40–60% | Of pSEO pages earn organic traffic in successful campaigns (with quality gates) |
| 60–90% | Ranking loss for sites hit by Google’s March 2026 scaled content abuse penalty |
| +22% | Visibility increase for sites with original data after March 2026 update |
| 800,000 pages | Zapier’s pSEO page count; generates 5.8M+ monthly organic visitors |
| $4.97 billion | Projected AI SEO software market size by 2033 (CAGR: 19.6%) |

Sources: Search Engine Land, Digital Applied, Ahrefs, Metaflow AI, Jasmine Directory (2025–2026)

Frequently Asked Questions About Programmatic SEO

What is programmatic SEO?

Programmatic SEO is the automated or semi-automated creation of large numbers of keyword-targeted web pages using templates and structured data. Instead of writing each page individually, teams design a reusable page template with dynamic placeholders, then populate it with data from a structured source to generate thousands of unique, crawlable pages that each target a specific long-tail keyword.

Is programmatic SEO still safe after Google’s March 2026 update?

Yes, programmatic SEO is still safe after the March 2026 update — but only when pages are differentiated by genuine, unique data. Sites that used pSEO to generate thousands of near-identical keyword-swapped pages suffered penalties of 60–90% ranking loss. Sites with real data differentiation — unique pricing, real inventory, live data feeds — saw visibility increases of approximately 22% as penalized competitors lost their positions.

How many pages should I start with for programmatic SEO?

Start with 500–2,000 pages in your first programmatic SEO batch. Monitor indexation rates and traffic performance for 30–60 days before scaling. If 40%+ of your first batch earns organic traffic, the template and data source are validated for expansion. If fewer than 20% earn traffic, audit the quality gates and differentiation before scaling further.

What are the best tools for programmatic SEO in 2026?

The most effective pSEO tool stack in 2026 includes: Ahrefs or Semrush for keyword research and SERP analysis; a headless CMS (Webflow, Contentful) or WordPress with custom post types for template management; a data pipeline tool (Whalesync, Make, or n8n) for populating templates from data sources; and an automation platform like Authenova for scheduling and publishing management. Google Search Console is essential for indexation monitoring.

How does programmatic SEO differ from content at scale?

Programmatic SEO uses templates and structured data to generate pages automatically. Content at scale uses AI writing tools to produce individual articles faster. The key difference is the uniqueness source: pSEO uniqueness comes from data differentiation; content-at-scale uniqueness comes from AI-generated prose. Both strategies can coexist — programmatic pages for transactional long-tail queries, AI-written articles for informational cluster content.

How long does it take for programmatic SEO pages to rank?

Programmatic SEO pages targeting low-competition long-tail keywords (difficulty under 20) typically appear in Google’s top 100 within 14–30 days of indexation, and in the top 20 within 60–90 days — provided they have at least one internal link from an established page. Higher-difficulty terms may take 6–12 months regardless of page quality. About 71% of new pSEO pages are indexed within the first 36 days when submitted via sitemap.

Automate Your Programmatic SEO Publishing Pipeline

Authenova handles the publishing, scheduling, and freshness rotation your programmatic SEO program needs — without manual intervention at each step. Connect your data source, configure your template strategy, and scale to thousands of pages.